Hey! So, let’s talk about latency. You know that annoying lag when you’re trying to do something important online? Yeah, it’s a total buzzkill.

Now, if you’re using Amazon ElastiCache, that can be a game-changer. But sometimes things still slow down, right? And that’s where we need to step in and tweak a few things for better performance.

Imagine the feeling of everything just working smoothly. It’s like gliding on ice instead of trudging through mud! Seriously, let’s figure out how to kick that latency to the curb together.

Maximizing Application Performance at Scale with Amazon ElastiCache: A Comprehensive Guide

Maximizing Application Performance with Amazon ElastiCache is all about reducing latency. You want your applications to run smoothly, right? Well, using a caching solution can be a game changer. Imagine working on a project that keeps lagging because every time you access the database, it takes forever! That’s frustrating. ElastiCache can help make that pain go away.

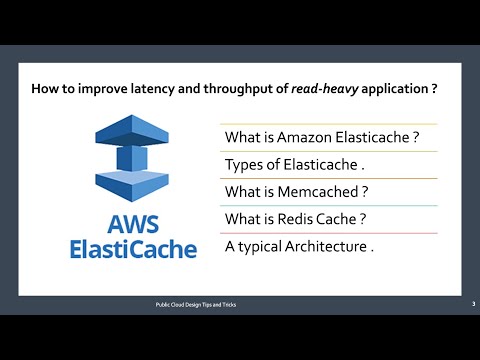

So, what exactly is Amazon ElastiCache? Basically, it’s a web service that makes it super easy to set up, operate, and scale in-memory caches in the cloud. It supports two popular caching engines: Redis and Memcached. Each has its strengths and weaknesses depending on your needs.

When we talk about reducing latency specifically in ElastiCache, there are a few things to keep in mind:

- Choose the Right Engine: Depending on what you need, pick Redis for more advanced data structures and features like persistence or Memcached for simple key-value pairs.

- Optimize Cache Size: Don’t just throw resources at the problem; size your cache effectively! If it’s too small, you’ll see constant evictions, your hit rate drops, and more requests fall through to the slower database.

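- Use Parameter Groups: Fine-tune configuration settings through parameter groups for better performance. For example, the maxmemory-policy parameter decides which keys get evicted when memory fills up, and picking a policy that matches your workload can make a difference.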

- Implement Cluster Mode: If you’re dealing with large datasets or traffic spikes, consider enabling cluster mode. It shards your cache into smaller pieces so the load is spread across nodes.

- Migrate Data Wisely: When moving data into ElastiCache from other databases or caches, use bulk operations instead of single inserts to speed things up.

- Caching Strategy Matters: Develop an effective caching strategy by determining what data should be cached and how long it should remain there!
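To make the bulk-loading tip concrete, here is a minimal sketch in Python. It assumes a redis-py style client (anything with a `pipeline()` method whose pipeline offers `set()` and `execute()`); the function name `bulk_load` and the batch size of 500 are illustrative choices, not ElastiCache requirements.

```python
from itertools import islice


def bulk_load(client, items, batch_size=500):
    """Write (key, value) pairs in batches, one network round trip per batch.

    `client` is assumed to behave like a redis-py client.
    """
    iterator = iter(items)
    written = 0
    while True:
        batch = list(islice(iterator, batch_size))
        if not batch:
            break
        # Queue the whole batch locally, then send it in a single round trip.
        pipe = client.pipeline(transaction=False)
        for key, value in batch:
            pipe.set(key, value)
        pipe.execute()
        written += len(batch)
    return written
```

With 1,200 items and a batch size of 500, that is three round trips instead of 1,200 separate ones. The best batch size depends on your value sizes, so treat 500 as a starting point to test, not a recommendation.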

Think of caching like having quick access to snacks instead of waiting for a full meal. If your application often needs certain data—like user sessions—caching this in ElastiCache means users see things happen instantly instead of waiting on database queries.
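Here is roughly what that looks like in code: a small cache-aside sketch for user sessions. It assumes a redis-py style client with `get()` and `setex()`; the `session:` key prefix, the `load_from_db` callback, and the 5-minute TTL are all made up for illustration.

```python
import json


def get_session(client, session_id, load_from_db, ttl_seconds=300):
    """Cache-aside lookup: try the cache first, fall back to the database.

    `client` is assumed to behave like a redis-py client; `load_from_db`
    is a hypothetical function that returns a dict or None.
    """
    key = f"session:{session_id}"
    cached = client.get(key)
    if cached is not None:
        return json.loads(cached)  # cache hit: no database query needed

    session = load_from_db(session_id)  # cache miss: take the slow path once
    if session is not None:
        # Store it with an expiry so stale sessions eventually drop out.
        client.setex(key, ttl_seconds, json.dumps(session))
    return session
```

The first request for a session pays the database cost; every request after that (until the TTL runs out) is served straight from memory.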

Now let’s talk about monitoring performance. Use Amazon CloudWatch metrics to track cache hit rates and memory usage. This helps you quickly spot when something’s off. A low hit rate, for example, could mean you’re caching the wrong data, your TTLs are too short, or the cache is too small and keeps evicting keys.
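If you want to sanity-check the hit rate yourself, the arithmetic is simple. The sketch below assumes you have already pulled hit and miss counts for some time window (for Redis nodes, CloudWatch publishes these as CacheHits and CacheMisses); the 0.8 threshold is an arbitrary example, not an AWS recommendation.

```python
def cache_hit_rate(hits, misses):
    """Return hits / (hits + misses), or None if there was no traffic."""
    total = hits + misses
    if total == 0:
        return None
    return hits / total


def needs_attention(hits, misses, threshold=0.8):
    """Flag a window whose hit rate falls below the (assumed) threshold."""
    rate = cache_hit_rate(hits, misses)
    return rate is not None and rate < threshold
```

For example, 9,000 hits and 1,000 misses gives a hit rate of 0.9, which passes the example threshold; 600 hits and 400 misses gives 0.6, which would get flagged.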

Another little trick is leaning on sharding, which is what ElastiCache cluster mode does for Redis. It spreads your keys across multiple shards, so no single node carries the whole load, and that helps keep latency down. Pair it with replicas in each shard, so if a primary node goes down, a replica can be promoted and requests keep getting served.
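Curious how a key ends up on a particular shard? Redis Cluster maps every key to one of 16,384 hash slots using a CRC16 checksum, and each shard owns a range of slots. Here is a small sketch of that mapping in Python. The function name `key_slot` is mine, and in practice your cluster-aware client does this for you, so this is just to show the idea.

```python
import binascii

HASH_SLOTS = 16384  # fixed number of slots in Redis Cluster


def key_slot(key: str) -> int:
    """Compute the Redis Cluster hash slot for a key.

    If the key contains a non-empty {hash tag}, only the text inside the
    first pair of braces is hashed, so related keys can share a slot.
    """
    data = key.encode("utf-8")
    start = data.find(b"{")
    if start != -1:
        end = data.find(b"}", start + 1)
        if end > start + 1:  # closing brace found and the tag is non-empty
            data = data[start + 1:end]
    # crc_hqx with an initial value of 0 is the CRC16 variant Redis Cluster uses.
    return binascii.crc_hqx(data, 0) % HASH_SLOTS
```

One practical takeaway: keys like `{user1000}.following` and `{user1000}.followers` hash to the same slot, which matters if you need multi-key commands to work in cluster mode.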

Lastly, be sure to keep testing! Changes you implement might seem good at first glance but always monitor their impact over time. Regularly assess how well your setup is performing because tech evolves quickly.

In short? By strategically using Amazon ElastiCache and being proactive about optimization, you can seriously enhance application performance while keeping latency low!

Understanding ElastiCache Redis Latency: Causes, Impacts, and Solutions

When it comes to ElastiCache Redis, latency is something you really want to keep an eye on. Latency, in simple terms, is the time it takes for your request to reach Redis and for the response to come back. High latency can lead to sluggish application performance, which nobody wants. So, let’s break down what causes this latency, its impacts, and what you can do about it.
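Before fixing anything, it helps to put a number on that round trip. Below is a minimal sketch that times any callable and reports percentiles in milliseconds. The function name `measure_latency_ms` and the sample count are my own choices; you would pass in whatever cache call you care about.

```python
import statistics
import time


def measure_latency_ms(operation, samples=200):
    """Time `operation` repeatedly and summarize the results in milliseconds.

    Use at least a couple hundred samples; with very few samples the
    percentile estimates are not meaningful.
    """
    timings = []
    for _ in range(samples):
        start = time.perf_counter()
        operation()
        timings.append((time.perf_counter() - start) * 1000.0)

    # quantiles(..., n=100) returns 99 cut points: index 49 is p50, 98 is p99.
    cuts = statistics.quantiles(timings, n=100)
    return {"p50": cuts[49], "p99": cuts[98], "max": max(timings)}
```

With a redis-py style client you might call it as `measure_latency_ms(lambda: client.ping())`, where `client` is your own connection. Watch the p99 and not just the median, since the slow tail is what users actually notice.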

First off, let’s chat about the causes of high latency in ElastiCache Redis:

- Network Issues: Sometimes it’s just a matter of bad connections. If there’s a problem with your network, or your app servers and cache nodes sit in different Availability Zones or regions, you might feel the delay.

- High Load: If too many clients are trying to access the same Redis instance at once, things can slow down. Think of it like rush hour traffic—you’ve got way too many cars on the road.

- Memory Constraints: Redis runs in-memory, so if you’re pushing it too far with data that doesn’t fit comfortably into memory, performance can dip.

- Configuration Errors: Misconfigurations like not using enough shards or incorrect timeout settings can seriously impact response times.

Now that we know some of the culprits behind latency issues, let’s talk about how this affects you and your applications. You know when you’re trying to load a page and it’s taking forever? That’s basically what can happen in your app if you’re facing high latency. Users get frustrated; they might leave your site altogether—like missing out on a great concert because traffic was awful.

The performance impacts can ripple through your whole operation:

- User Experience: Slow load times lead to unhappy users. If they’re waiting around too long, they’re likely to bounce.

- Lost Revenue: If users abandon transactions due to lagging performance, that’s money walking out the door.

- Bottlenecks: Any delay can create bottlenecks that affect other parts of your system or service quality.

So now that we’ve established why latency is an issue, what about solutions? Here are some practical fixes that could help reduce that pesky delay:

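- AWS Region Selection: Run your cache in the same region as the application servers that call it (ideally the same Availability Zone), and pick a region close to your user base. This helps cut down on network-related latency.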

- Add More Nodes: You might want to consider scaling out by adding more nodes or shards for better distribution of requests across different servers.

- Tuning Parameters: Look into tweaking settings on both sides: client-side options like connection pooling and timeout limits, and server-side parameters in your parameter group, based on app needs and user patterns.

- Caching Strategy Improvements: This might seem obvious, but review how you’re caching data! Make sure frequent queries hit the cache rather than going back to the database every time.

Just remember though: monitoring tools are vital! Keep an eye on metrics so you know when things start dragging down performance.

In short, understanding what causes latency in ElastiCache Redis—and tackling those issues head-on—can save you from frustrating delays and ensure smooth sailing for your applications. Do keep these points in mind as they could really make a difference!

Understanding ElastiCache High Latency: Causes, Impacts, and Solutions

So, you’re dealing with some high latency in Amazon ElastiCache, huh? That can be really frustrating! It’s like waiting for a webpage to load when you’re super excited to see what’s there. Seriously, high latency can slow down your applications and mess with user experience. Let’s break it down.

What causes high latency in ElastiCache? Well, there are a few key culprits:

- Network issues: The connection between your application and the cache can get sketchy. If your data centers or regions are far apart, you might notice some lag.

- Resource limitations: Your instance type could be too small for the workload. If you’re trying to do too much with not enough resources, it’ll bottleneck.

- High traffic: An unexpected spike in user requests can overwhelm the cache. It’s like trying to fit too many people into a tiny room.

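- Poorly optimized queries: If you’re not structuring your data access well, every request might take longer than it should. With Redis, a single expensive command (like KEYS over a big keyspace, or fetching one enormous value) can hold up every other client, because commands are mostly processed one at a time. Think of it as asking for directions but going off on a wild tangent first!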

The impacts of these latency issues? For starters, users get impatient when pages don’t load fast enough, which could lead to reduced satisfaction. Imagine clicking an app and waiting ages—it’s frustrating! Plus, if you run an e-commerce site or something similar, every second counts towards conversions. High latency can directly hurt your bottom line!

Now, how do we tackle this?

- Monitor performance: Use Amazon CloudWatch to keep an eye on metrics like response times, CPU usage, memory, and evictions. It gives you insight into what’s going wrong.

- Select appropriate instance types: Make sure your ElastiCache setup matches your needs. Sometimes just moving up to a larger instance type can make all the difference.

- Add replication and clustering: Distributing workloads across multiple nodes helps manage traffic better and reduces strain on any single point.

- Tune configuration settings: Adjust parameters based on recommended best practices for Redis or Memcached—whatever you’re using—tailoring it to how you operate!

A little anecdote here: I once set up a caching system for an online game launch weekend—it was chaos with thousands logging in at once. High latency hit us hard at first until we pumped up the instance size and optimized our queries. Once we did that? Smooth sailing! Everyone was back in the game without a hitch.

The key takeaway? Keeping an eye on performance metrics and being ready to adjust things ensures users have the best experience possible without those annoying lags getting in their way!

You know, the other day I was working on this project that heavily depended on database interactions, and man, did I run into some latency issues that made my hair stand on end! It got me thinking about how crucial reducing latency is, especially when using something like Amazon ElastiCache.

So, you might be wondering what exactly latency is. In simple terms, it’s the delay before a transfer of data begins following an instruction. Picture waiting for your friend to text you back when you’re just dying to know where to meet up. That’s kind of what latency feels like in the tech world—a frustrating pause!

When using Amazon ElastiCache, which is designed for in-memory data caching, you’d want it to be as snappy as possible. Why? Because no one wants a sluggish app; it kills the user experience. You want those responses back instantly! And let’s be real, when your app is running slowly, users can get pretty impatient.

One way to tackle this is by optimizing your cache strategy. Like if you’re frequently fetching the same data over and over again from your database, it’s time to store that in ElastiCache! Doing this not only cuts down on latency but also eases up database load. It’s like grabbing a snack from the pantry instead of making a full-course meal every time you’re hungry.

Another thought—it’s super important to set appropriate expiration times for your cached items. Too short and you’ll end up fetching data more often than you’d like; too long and your users might see stale information. Finding that sweet spot is essential for keeping things fresh yet efficient.

And then there’s choosing the right instance types and scaling correctly according to your needs. If you’re starting small but expect growth later on, think about how you’d want it set up now so it won’t be a headache later down the road. Kinda like planning ahead for those unexpected guests!

Honestly though, these latencies can really lead you down a rabbit hole if not tackled properly. I remember being knee-deep in troubleshooting one night and thinking it was just another “quick” fix until I found myself hours deep into performance tuning—joy! So yeah, learning how to reduce latency isn’t just some boring tech talk; it’s about making your applications smooth and responsive for users who just want things to work fast without interruptions.

In essence, reducing latency with tools like Amazon ElastiCache can make a huge difference in performance—and help keep both developers and users happy!