So, let’s chat about GPUs for a sec. You know, those little powerhouses that make your games look gorgeous and your videos smooth? Yeah, those.

Ever wondered how your computer figures out which GPU to use? Or why sometimes it seems to forget what it has? It can be a little wild, honestly.

I remember the first time my laptop just wouldn’t recognize my fancy graphics card. I was like, “What do you mean you don’t see it?” Talk about frustrating!

Anyway, understanding how GPU detection works in modern operating systems is pretty neat. It’s all about making sure your machine runs the way it should. So grab a drink and let’s untangle this tech puzzle together!

Discover the Top GPU in the World: Unveiling the Best Graphics Card of 2023

Let’s start with the hardware everyone is talking about. In 2023, plenty of people are excited about the graphics cards on the market. Rather than crown a winner, though, it’s more useful to look at how these cards work with modern operating systems and how you can check that your system recognizes yours correctly.

First off, what is a GPU? It’s basically a chip designed to handle all things visual on your screen. When you’re gaming, watching movies, or even just scrolling through photos, the GPU is hard at work making everything look smooth and beautiful.

Now, why should you care about detection? Well, if your operating system doesn’t recognize your GPU correctly, you might not get the best performance possible. That can lead to stuttery gameplay or even graphical errors. Ugh! Nobody wants that.

When you install a new graphics card, most modern operating systems—like Windows 11—are pretty good about finding it without any hassle. But sometimes things can go sideways. You may need to manually check if it’s detected correctly.

Here’s how you can do that:

- Open Device Manager: Right-click on the Start button and select Device Manager from the menu.

- Expand Display adapters: You’ll see your GPU listed there if it’s properly detected.

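- Check for errors: If there’s a yellow warning triangle next to your GPU, uh oh! Windows has flagged a problem with the device or its driver. Double-click the entry and read the Device status box to see the error code.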

What happens next? You may want to update drivers or ensure it’s seated well in the PCIe slot inside your PC. Ever had that moment when you’re trying to fix something but realize you didn’t even plug it in right? Yeah, we’ve all been there!
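If you’d rather script that check than click through Device Manager, here is a minimal sketch. It assumes a Windows machine with PowerShell on the PATH and asks the standard `Win32_VideoController` class for each adapter’s name, driver version, and `ConfigManagerErrorCode` (0 means Windows considers the device healthy). The function names `parse_adapters` and `query_adapters` are made up for this example.

```python
import json
import subprocess

# PowerShell command that asks Windows for every video controller it knows.
# ConfigManagerErrorCode is 0 when Windows considers the device healthy.
PS_COMMAND = (
    "Get-CimInstance Win32_VideoController | "
    "Select-Object Name, DriverVersion, ConfigManagerErrorCode | "
    "ConvertTo-Json"
)


def parse_adapters(raw_json):
    """Turn the JSON printed by PowerShell into a list of small dicts."""
    if not raw_json.strip():
        return []
    data = json.loads(raw_json)
    # ConvertTo-Json prints a bare object (not a list) for a single adapter.
    if isinstance(data, dict):
        data = [data]
    return [
        {
            "name": item.get("Name"),
            "driver": item.get("DriverVersion"),
            "ok": item.get("ConfigManagerErrorCode") == 0,
        }
        for item in data
    ]


def query_adapters():
    """Run the query. Only works on Windows (hypothetical helper)."""
    result = subprocess.run(
        ["powershell", "-NoProfile", "-Command", PS_COMMAND],
        capture_output=True, text=True, check=True,
    )
    return parse_adapters(result.stdout)
```

Calling `query_adapters()` on a Windows PC should give you one entry per adapter. Any entry where `ok` is false is the scripted version of that yellow triangle.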

When it comes down to which GPU is considered “the best”, opinions vary so much! Some swear by NVIDIA’s latest offerings like the RTX 4090 for top-tier performance. Others might point to AMD’s Radeon RX 7900 XTX for its competitive pricing and capabilities. My take is that what works best really depends on what you’re doing with it.

For example:

- If you’re into gaming at high resolutions with ray tracing enabled, you’ll want something like an RTX 4080 or higher.

- If video editing or graphic design is more your jam, check which kind of GPU acceleration your software uses. Some rendering and editing tools rely on CUDA, which only runs on NVIDIA cards.

And let’s not forget software compatibility; some applications run better on one brand over another due to optimization. It’s wild how intricate this world can get!

In short, knowing how to check if your GPU is recognized by your OS can save you from performance headaches down the line. And hey—we all want our tech running smoothly so we can focus on enjoying our favorite games or projects without glitches ruining the vibe!

Understanding GPU Architecture: Key Concepts and Innovations in Graphics Processing

So, let’s break down what GPU architecture is all about, especially how it connects to modern operating systems and their ability to detect these graphics powerhouses. You know, GPUs or Graphics Processing Units aren’t just fancy bits of tech; they’re vital for everything from gaming to deep learning.

GPU Basics

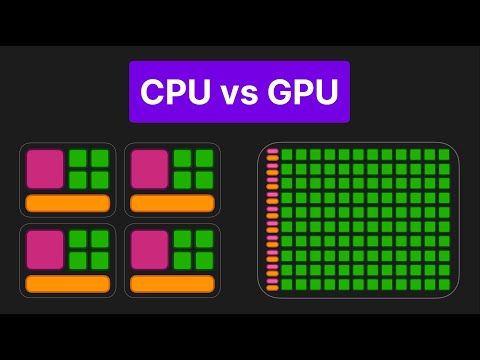

At the heart of a GPU is its architecture. Think of it as a complex engine designed specifically for graphics processing. Unlike CPUs, which handle general tasks, GPUs are built to crunch numbers in parallel—like having hundreds of workers doing the same job at once! This makes them perfect for rendering images and video.

Parallel Processing

This is where things get cool. A GPU has thousands of smaller cores rather than a few powerful ones. This allows it to take on multiple tasks simultaneously. Imagine trying to paint a picture with one giant brush versus using hundreds of tiny ones—it’s way faster with the little brushes! That’s basically how GPUs work when they process large sets of data.
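Here is a tiny sketch of that “many little brushes” idea. It is only an illustration of the shape of the work, not how a real GPU is programmed: the function `brighten` plays the role of the small job each core runs, and the names and the brightness value of 40 are made up for the example.

```python
from concurrent.futures import ThreadPoolExecutor


def brighten(pixel, amount=40):
    """The small job: handles one pixel and needs nothing from its neighbours."""
    return min(pixel + amount, 255)


def brighten_image(pixels, workers=8):
    """Hand every pixel to a pool of workers; results keep their order."""
    # Because no pixel depends on another, the work can be split up freely.
    with ThreadPoolExecutor(max_workers=workers) as pool:
        return list(pool.map(brighten, pixels))
```

Python threads won’t actually make this faster. The point is that every pixel is independent, which is exactly the kind of job a GPU can spread across thousands of cores in hardware.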

Memory Architecture

A GPU has its own memory too! VRAM (Video RAM) sits on the graphics card and holds the textures, geometry, and frame buffers the GPU is working on right now. The faster this memory can be accessed, the better your graphics performance will be. And, as games and applications get more demanding, having enough VRAM becomes essential.
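To get a feel for how quickly VRAM fills up, here is a back-of-the-envelope sketch. It assumes uncompressed frames at 4 bytes per pixel (8 bits each for red, green, blue, and alpha), and the function name is made up for the example. Real games also store textures and geometry, so treat this as a lower bound for a single frame.

```python
def framebuffer_mib(width, height, bytes_per_pixel=4):
    """Size of one uncompressed frame in MiB (assumes 4 bytes per pixel)."""
    return width * height * bytes_per_pixel / (1024 * 1024)


full_hd = framebuffer_mib(1920, 1080)   # one 1080p frame
ultra_hd = framebuffer_mib(3840, 2160)  # one 4K frame, four times the pixels
```

A single 4K frame works out to roughly 31.6 MiB, about four times the 7.9 MiB of a 1080p frame. That is one reason higher resolutions eat into VRAM so quickly.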

Modern Innovations

Recent innovations have introduced features like Ray Tracing and AI-driven upscaling. Ray Tracing mimics how light interacts with objects in real life, creating stunningly realistic visuals. Just think about how amazing reflections look in modern games! AI-driven upscaling, such as NVIDIA’s DLSS, renders each frame at a lower resolution and then fills in detail based on learned patterns, so you get a sharper image without paying the full rendering cost.

GPU Detection in Operating Systems

Now onto something practical: how do operating systems spot these GPUs? When you boot up your computer, the firmware and then the OS scan the PCI Express bus for attached hardware. Every device on the bus reports a vendor ID, a device ID, and a class code that says what kind of device it is. The OS uses that information to understand what kind of GPU it’s dealing with.
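On Linux you can peek at this identification data yourself, because the kernel exposes each PCI device as a folder of small text files under `/sys/bus/pci/devices`. The sketch below is a minimal example, not a complete detection tool. The function names are made up, and the vendor table only covers the three big GPU makers.

```python
from pathlib import Path

# PCI vendor IDs assigned to the three big GPU makers.
VENDORS = {0x10DE: "NVIDIA", 0x1002: "AMD", 0x8086: "Intel"}
DISPLAY_CLASS = 0x03  # PCI base class for display controllers


def read_hex(path):
    """Read a sysfs file such as '0x10de' and return it as an integer."""
    return int(path.read_text().strip(), 16)


def find_gpus(sysfs_root="/sys/bus/pci/devices"):
    """List display controllers found under a sysfs-style directory."""
    gpus = []
    root = Path(sysfs_root)
    if not root.is_dir():  # not Linux, or sysfs is not mounted
        return gpus
    for dev in sorted(root.iterdir()):
        try:
            class_code = read_hex(dev / "class")
            vendor = read_hex(dev / "vendor")
            device = read_hex(dev / "device")
        except (OSError, ValueError):
            continue  # skip entries we cannot read
        # The class file holds 24 bits: base class, subclass, interface.
        if class_code >> 16 != DISPLAY_CLASS:
            continue
        gpus.append({
            "address": dev.name,
            "vendor": VENDORS.get(vendor, f"unknown (0x{vendor:04x})"),
            "device_id": f"0x{device:04x}",
        })
    return gpus
```

Running `find_gpus()` on a Linux box should return one entry per graphics device, integrated or discrete. On other systems it simply returns an empty list.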

Drivers Matter

To communicate effectively with the OS, GPUs rely on drivers, software that acts as a translator between your hardware and software applications. If your drivers are outdated or incompatible, you might end up with poor performance or weird graphical glitches. Nobody wants that during an intense gaming session!

The Bottom Line

So yeah, understanding GPU architecture helps you appreciate just how much power these devices unleash for graphics processing today: from what they do behind the scenes to the innovations that keep evolving tech forward. And knowing how your operating system interacts with them can help troubleshoot issues when they arise—because let’s face it, we all want our tech to run smoothly without hiccups.

Step-by-Step Guide: How to Check Your Graphics Card on Windows 10

Checking your graphics card on Windows 10 is easier than you might think! Whether you’re into gaming, video editing, or just want to make sure your PC is running smoothly, knowing what GPU you have can come in handy. Here’s how you can do it.

First off, right-click on the Start button. The little menu that pops up is your gateway to all sorts of tools. Look for Device Manager in that menu and click it. Device Manager is basically a list of all the hardware in your computer.

Now, when the Device Manager opens up, you’ll see a list of categories. You’ve got stuff like Display adapters, which is where all the magic happens! Click on that little arrow next to it to expand it and—boom!—you’ll see your graphics card listed there.

If you’re wondering why this matters, well, let me tell you a story. I once had a friend who bought a fancy new game only to discover her old laptop couldn’t run it because she hadn’t checked her GPU first. So yeah, knowledge saves you from playing “Is my PC good enough” guessing games!

Now if you want more detailed info about your graphics card—like the model number and specifications—there’s another way to check it out. Press Windows key + R together to open the Run dialog box. Type in dxdiag and hit Enter. This will open the DirectX Diagnostic Tool.

In this tool, go to the Display tab. You’ll find everything from manufacturer info to memory size listed here. Seriously, it’s like a treasure chest of information for gamers and techies alike!
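The DirectX Diagnostic Tool can also save everything it shows as a text file (the Save All Information button does this), which makes it easy to pull out just the GPU lines with a script. The sketch below assumes the saved report labels its fields “Card name”, “Manufacturer”, and “Dedicated Memory”. Those labels may differ between Windows versions, so check your own file first. The function name is made up for this example.

```python
def parse_dxdiag_cards(report_text):
    """Collect one dict per 'Card name' entry in a saved dxdiag report."""
    # Labels assumed to appear in the report, mapped to friendlier keys.
    wanted = {
        "Card name": "name",
        "Manufacturer": "manufacturer",
        "Dedicated Memory": "dedicated_memory",
    }
    cards = []
    for line in report_text.splitlines():
        label, sep, value = line.partition(":")
        label = label.strip()
        if not sep or label not in wanted:
            continue
        if label == "Card name":
            cards.append({})  # a new card section starts here
        if cards:
            # Keep the first value seen so later sections cannot overwrite it.
            cards[-1].setdefault(wanted[label], value.strip())
    return cards
```

Read the saved file into a string, pass it to `parse_dxdiag_cards`, and you get a short list with one entry per graphics card instead of pages of diagnostics.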

If you’re still itching for even more detail (I get it!), try using some third-party software like GPU-Z or Speccy. These programs can give you real-time data about temperatures and usage stats if you’re monitoring performance while gaming or running heavy applications.

So there you have it! Checking your graphics card can be done in just a few clicks. Just remember: knowing what’s under the hood not only helps with upgrades but also with troubleshooting those pesky errors down the line! If something’s off with performance or if certain games won’t run at all—you know where to look first!

Sometimes, when you fire up your computer and want to play the latest game or edit a video, you might wonder how it all works behind the scenes. One of the unsung heroes of this whole operation is the GPU, or Graphics Processing Unit. It’s what makes those stunning graphics and smooth animations possible. But how does your operating system actually figure out what kind of GPU you have? That’s a pretty cool thing to understand, I think.

So, when your computer boots up, it goes through something called hardware detection. This is basically the OS checking out what’s installed and ready to roll. The OS finds the GPU through a process called enumeration: it walks the PCIe bus and asks each device, “Hey, are you there? What are you?” The GPU answers with identifiers such as its vendor ID and device ID. The OS then loads a matching device driver, the little program that knows the card’s specs and capabilities and lets your OS talk to the hardware smoothly.

Now think about times when you’ve plugged in a new piece of tech and it just… works right away. That’s because modern operating systems have these built-in databases of drivers for countless devices. If your OS recognizes your GPU model from this list, it’ll set things up without breaking a sweat.
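That built-in lookup can be pictured as a simple table. The sketch below is a heavily simplified assumption of how it works: real operating systems also match on the exact device model, not just the maker. The driver names are the open-source Linux kernel drivers for each vendor, while `pick_driver` and the fallback label are made up for this example.

```python
# Simplified lookup from PCI vendor ID to a Linux kernel driver name.
DRIVER_TABLE = {
    0x10DE: "nouveau",  # open-source driver for NVIDIA cards
    0x1002: "amdgpu",   # AMD cards
    0x8086: "i915",     # Intel integrated graphics
}
FALLBACK = "generic display driver"  # hypothetical label for basic output
DISPLAY_CLASS = 0x03                 # PCI base class for display controllers


def pick_driver(vendor_id, class_code):
    """Return a driver name for a display device, or None for other hardware."""
    if class_code >> 16 != DISPLAY_CLASS:
        return None  # not a GPU, so this table does not apply
    return DRIVER_TABLE.get(vendor_id, FALLBACK)
```

A known vendor gets its matching driver right away, and an unknown one falls back to basic output. That fallback is the situation where installing the manufacturer’s driver yourself pays off.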

But what if it doesn’t recognize it? Ugh! That can be a headache! You might experience performance issues or even crashes because the OS can’t fully harness the power of your GPU. It’s like trying to ride a bike with flat tires—it just doesn’t feel right! So here is where manual driver installation comes in handy. You can usually find drivers on the manufacturer’s website—sometimes it’s tedious but totally worth it for that extra performance boost.

It’s interesting too how different operating systems, like Windows, macOS or Linux, handle GPU detection in their own ways. They each have different methods for communicating with hardware. For instance, on Windows you usually need the manufacturer’s proprietary driver for full functionality, while Linux shines when it comes to open-source options: community-maintained drivers such as amdgpu ship with the kernel itself.

Thinking back on my own experiences with GPUs—I remember building my first PC as a teenager to play games better than my friends. After hours of assembling parts and wiring everything up, I felt like a superstar—until nothing showed up on my monitor! Cue panic mode! It turned out that my new GPU required an update that I hadn’t installed yet. Once I sorted that out though? Pure bliss watching those graphics come to life!

In summary, understanding how GPUs are detected isn’t just techy mumbo jumbo; it’s crucial for getting the best performance from your hardware and ensuring everything runs smoothly. Next time you’re enjoying some high-def gaming or sleek video editing, remember all those behind-the-scenes chats happening between your system and that nifty piece of technology doing all the heavy lifting!